Did you know that, by often-cited estimates, over ninety percent of the information on the internet never appears in the search engines you use every day? Conventional crawlers only index the surface web, so onion search engines need more involved methods to find and list sites. Without these specialized tools, finding your way through the dark web means wading through a sea of broken links and empty pages. If you are curious about how these systems stay updated in an environment that changes every hour, you are in the right place.

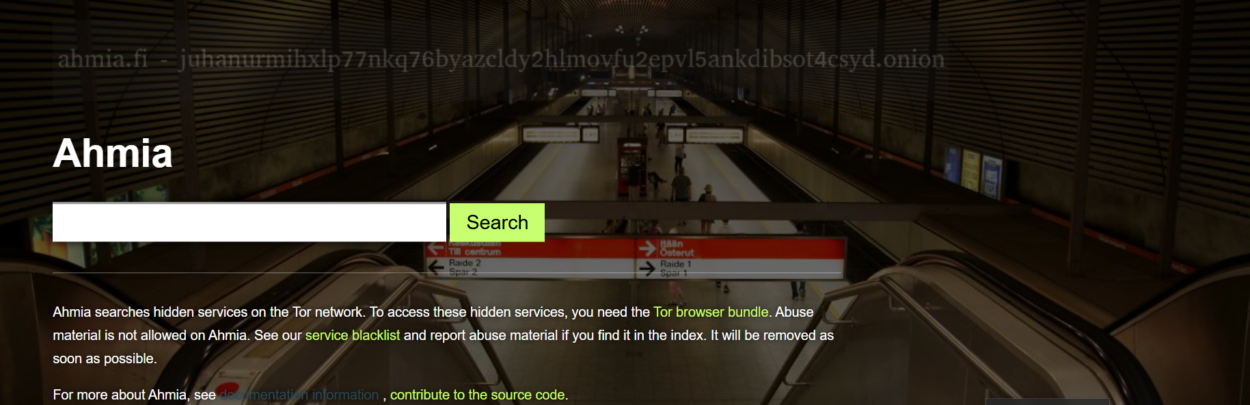

The anonymous browsing landscape is much more organized today than it was just a few years ago. In 2026, search tools are no longer just lists of random links. They are sophisticated databases that prioritize uptime and content quality. You might notice that when you look for something specific, the results feel more relevant and less like a gamble, because the backend technology has evolved to handle the unique quirks of the Tor network.

The Mechanics of Deep Web Crawling

Crawling the dark web is not as simple as following a trail of breadcrumbs. On the surface web, sites link to each other constantly, making it easy for bots to jump from one page to another. Onion services, by contrast, often exist in isolation, with few or no inbound links. To solve this, modern search engines use distributed nodes that act like digital scouts, constantly pinging known addresses to see if they are still active. This helps maintain a fresh index for anyone trying to find reliable entry points.

These scouts do more than just check whether a site is online. They look at the headers and the structure of the page to figure out what it is about. Because onion addresses are just long strings of random-looking characters, the search engine must rely entirely on the content it finds inside. You can think of it like a library where all the books have blank covers: the librarian has to open every single one to know which shelf it belongs on.

- Active Pinging: Systems check site status every few minutes to remove dead links.
- Content Scrapers: Specialized scripts read the text to determine the site’s purpose.
- Recursive Discovery: Bots find new links mentioned within existing directories.
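The recursive discovery step can be illustrated with a short sketch. It assumes pages have already been fetched and are held in a dictionary (a hypothetical stand-in for real requests made over Tor), and it only recognizes current v3 onion hostnames, which are 56 base32 characters long. The function names are illustrative and do not come from any particular search engine.

```python
import re
from collections import deque

# v3 onion hostnames are 56 base32 characters (a-z, 2-7) followed by ".onion".
ONION_RE = re.compile(r"\b([a-z2-7]{56})\.onion\b")


def extract_onion_hosts(text: str) -> set[str]:
    """Return every v3 onion hostname mentioned in a block of text."""
    return {match.group(0) for match in ONION_RE.finditer(text.lower())}


def discover(pages: dict[str, str], start: str) -> list[str]:
    """Breadth-first walk that follows onion links found in page text.

    `pages` maps a hostname to text already fetched from it. A real crawler
    would fetch each page over Tor instead of reading from a dict.
    """
    seen = {start}
    queue = deque([start])
    order = []
    while queue:
        host = queue.popleft()
        order.append(host)
        # Sort so the visiting order is deterministic.
        for found in sorted(extract_onion_hosts(pages.get(host, ""))):
            if found not in seen:
                seen.add(found)
                queue.append(found)
    return order
```

Starting from one known directory, `discover` returns each reachable hostname once, in the order it was first seen, which is roughly how a seed list grows into an index.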

Obstacles in Categorizing Hidden Data

One of the biggest hurdles you face when building a dark web index is the lack of metadata. Many surface websites use tags that tell Google exactly what they are. On the Tor network, site owners often skip these steps to stay more private. Search engines in 2026 use machine learning to look at patterns in language and image types to group sites into categories like “Forums,” “Privacy Tools,” or “Financial Services.”
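To make the grouping idea concrete, here is a deliberately simple sketch that scores page text against keyword lists. It is a stand-in for the machine learning models mentioned above, not a description of how any engine actually classifies sites. The keyword sets and the "Uncategorized" fallback are assumptions made for illustration.

```python
import re
from collections import Counter

# Hypothetical keyword lists; a production system would learn such signals.
CATEGORY_KEYWORDS = {
    "Forums": {"forum", "thread", "reply", "board", "post"},
    "Privacy Tools": {"encryption", "vpn", "pgp", "anonymity", "privacy"},
    "Financial Services": {"wallet", "escrow", "payment", "exchange", "bitcoin"},
}


def categorize(text: str, default: str = "Uncategorized") -> str:
    """Pick the category whose keywords appear most often in the text."""
    words = Counter(re.findall(r"[a-z]+", text.lower()))
    scores = {
        category: sum(words[w] for w in keywords)
        for category, keywords in CATEGORY_KEYWORDS.items()
    }
    best = max(scores, key=scores.get)
    # Fall back when no keyword matched at all.
    return best if scores[best] > 0 else default
```

A learned classifier replaces the hand-written lists with weights inferred from labeled examples, but the input (page text only, no metadata) and the output (one category label) stay the same.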

Speed is another major issue that these platforms have to manage. The Tor network relays data through several hops, each with its own layer of encryption, which makes crawling slow. If a search engine crawls too aggressively, it might overload the very site it is trying to index. This is why you sometimes see a delay between a new site launching and it appearing in your favorite search results. Developers have to balance the need for speed with the technical limits of the network.
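One common way to avoid overloading a fragile site is to enforce a minimum gap between requests to the same host. The sketch below shows that idea under stated assumptions: the class name, the delay value, and the injectable clock are all illustrative choices, not taken from any specific crawler.

```python
import time


class PoliteScheduler:
    """Enforce a minimum gap between requests to the same host."""

    def __init__(self, min_delay: float, clock=time.monotonic, sleep=time.sleep):
        self.min_delay = min_delay
        self._clock = clock      # injectable so the logic can be tested
        self._sleep = sleep
        self._last_request: dict[str, float] = {}

    def wait_time(self, host: str) -> float:
        """Seconds still to wait before `host` may be contacted again."""
        last = self._last_request.get(host)
        if last is None:
            return 0.0
        return max(0.0, self.min_delay - (self._clock() - last))

    def acquire(self, host: str) -> None:
        """Block until `host` may be contacted, then record the request time."""
        delay = self.wait_time(host)
        if delay > 0:
            self._sleep(delay)
        self._last_request[host] = self._clock()
```

A crawler would call `acquire(host)` immediately before each request. Because the delay is tracked per host, a slow site never holds up requests to unrelated sites.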

Reliability is the final piece of the puzzle. Because hidden services can disappear forever at the push of a button, the index is always in a state of flux. To help users find what they need, many platforms now surface historical uptime data that shows which sites have stayed online the longest. This historical data is vital for anyone who needs consistent access to specific resources.
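Historical reliability can be tracked with very little machinery. The following sketch records the outcome of each reachability check and ranks hosts by the fraction of checks that succeeded. The `ProbeHistory` class and the tie-breaking rule (longer histories first) are assumptions chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class ProbeHistory:
    """Record of reachability checks for one hidden service."""
    results: list[bool] = field(default_factory=list)

    def record(self, reachable: bool) -> None:
        self.results.append(reachable)

    def uptime_ratio(self) -> float:
        """Fraction of probes that succeeded; 0.0 when nothing is recorded."""
        if not self.results:
            return 0.0
        return sum(self.results) / len(self.results)


def rank_by_uptime(histories: dict[str, ProbeHistory]) -> list[str]:
    """Order hosts from most to least reliable; longer histories break ties."""
    return sorted(
        histories,
        key=lambda h: (histories[h].uptime_ratio(), len(histories[h].results)),
        reverse=True,
    )
```

Breaking ties on history length means a site with ten successful checks outranks one with two, reflecting the point above that a long record of staying online is itself a signal.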

Verification & Safety Standards in 2026

Safety is likely your top priority when you venture into the dark web. In the past, search results were often filled with clones or malicious mirrors designed to steal data. The best search engines now use verification systems. They look for digital signatures that prove a site is the official version and not a fake. This layer of protection makes the experience much safer for the average person.

Some engines go a step further and use community feedback. If a site starts behaving badly or redirects users to a scam, the community can flag it. The search engine then moves that link down in the rankings or removes it entirely. This democratic way of policing the web helps keep the environment cleaner. For example, if you are looking for a specific directory, a community-maintained overview of Tor gateways can show you which ones are currently considered the most secure.
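The flag-then-demote behavior can be sketched as a small scoring rule. The thresholds and the halving penalty below are hypothetical values picked for illustration. Real engines do not publish a common formula, so treat this as one possible shape rather than an actual policy.

```python
from typing import Optional

DEMOTE_AFTER = 3    # hypothetical: flags before a link starts sinking
REMOVE_AFTER = 10   # hypothetical: flags before a link is delisted


def apply_flags(base_score: float, flag_count: int) -> Optional[float]:
    """Return the adjusted ranking score, or None if the link is delisted."""
    if flag_count >= REMOVE_AFTER:
        return None
    if flag_count >= DEMOTE_AFTER:
        # Halve the score once at the threshold and again for each extra flag.
        return base_score / (2 ** (flag_count - DEMOTE_AFTER + 1))
    return base_score
```

Requiring several flags before anything changes is a design choice that keeps a single hostile report from burying a legitimate site.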

- Signature Matching: Verifying that the onion address matches the developer’s public key.
- Blacklist Syncing: Sharing data with other privacy groups to block known malicious actors.
- Uptime Ranking: Giving higher priority to sites that have been stable for months.
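Signature matching is possible because a v3 onion address is derived directly from the service's Ed25519 public key: the hostname is the base32 encoding of the key, a two-byte SHA3-256 checksum, and a version byte. The sketch below follows that derivation as laid out in Tor's v3 onion service specification. How an engine obtains the developer's published key is outside its scope, and the function names are illustrative.

```python
import base64
import hashlib

VERSION = b"\x03"
CHECKSUM_PREFIX = b".onion checksum"


def onion_address_from_pubkey(pubkey: bytes) -> str:
    """Derive the v3 onion hostname for a 32-byte Ed25519 public key."""
    if len(pubkey) != 32:
        raise ValueError("Ed25519 public keys are 32 bytes")
    # The checksum is the first two bytes of SHA3-256 over prefix, key, version.
    checksum = hashlib.sha3_256(CHECKSUM_PREFIX + pubkey + VERSION).digest()[:2]
    label = base64.b32encode(pubkey + checksum + VERSION).decode("ascii").lower()
    return label + ".onion"


def address_matches_key(address: str, pubkey: bytes) -> bool:
    """True when the address is the one this public key produces."""
    return address.strip().lower() == onion_address_from_pubkey(pubkey)
```

A clone site cannot reuse the official address without the matching private key, so comparing a listed address against a key published through a trusted channel is enough to separate the original from a lookalike.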

How Modern Interfaces Help You Navigate

If you used the dark web ten years ago, you probably remember how ugly and difficult the search engines were. They looked like something from the nineties. Today, the interfaces are clean and easy to use. They offer filters for language, site age, and even “safety scores.” These features are there to help you make informed decisions about where you click.

Developers are also focusing more on helping users understand the “why” behind the results. Instead of just a list of links, you often get a short snippet of text that explains what the site does. This transparency is a big step forward. It reduces the need for you to click on every single link just to find one piece of information. By pairing secure navigation with better design, these engines act more like guides than simple directories.

The goal for the future is to make the dark web as searchable as the surface web, without giving up any of the privacy that makes it special. As more people look for ways to escape data tracking, these onion search engines will become even more important. They are the gatekeepers of a more private version of the internet, ensuring that information remains accessible to everyone, regardless of where they live or what their situation is.

FAQ

Are onion search engines legal to use?

Yes, using a search engine to find links on the Tor network is generally legal in most places. The search engine itself is just a tool for finding information. The legality of the content you choose to view or the actions you take after clicking a link depends on your local laws.

Why do some links in the search results fail to load?

Hidden services are hosted on private servers that are often less stable than professional data centers. A site might be down for maintenance or the owner may have turned off the server. If a link does not work, try again in a few hours or look for a mirror link in the search results.

Do the search engines track my search history?

Many reputable onion search engines are built with a “no-logs” policy. They do not save your IP address or your search queries because that would go against the whole point of using the Tor network. Always check the “About” or “Privacy” page of the engine to see how they handle your data.

How can I tell if a result is a scam?

Look for verification badges or high “trust scores” if the search engine provides them. You should also be careful if a site asks for personal information or payment immediately. Using a well-known directory to cross-reference links is a smart way to stay safe.
